Project update 3 of 13

A GNU Parallel Clustering Demo

In this update, we’re going to take a look at harnessing the power of the Circumference C25 in the simplest way possible: using GNU Parallel.

At its heart, GNU Parallel is a simple tool for running multiple jobs in parallel. Unlike traditional multi-threading, it doesn’t require that the software you’re running is written and compiled with multiple processor cores in mind; it doesn’t require any modification to your existing software at all, in fact.

For this demo, we’ll be running Google’s Guetzli perceptual JPEG encoder across a directory of 50 images to reduce their file sizes without any perceptible loss of quality. Guetzli is a very processor- and memory-intensive tool, and one which has been written to run in a single thread. To start:

for i in *jpg; do guetzli "$i" "$i-compressed.jpg"; done

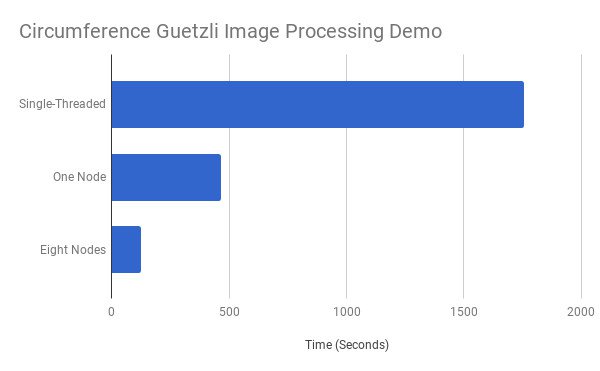

This command runs Guetzli on all JPEG images in the current directory in turn, writing them out with a -compressed.jpg suffix. On the sample directory, running on a single Raspberry Pi 3 Model B, this takes quite a while: 1,755 seconds, all told.

GNU Parallel, at its simplest, lets you turn the highly-serial Guetzli job into a highly-parallel equivalent. After cleaning up the images from the first run - as simple as rm *-compressed.jpg - we can run the same task parallelised:

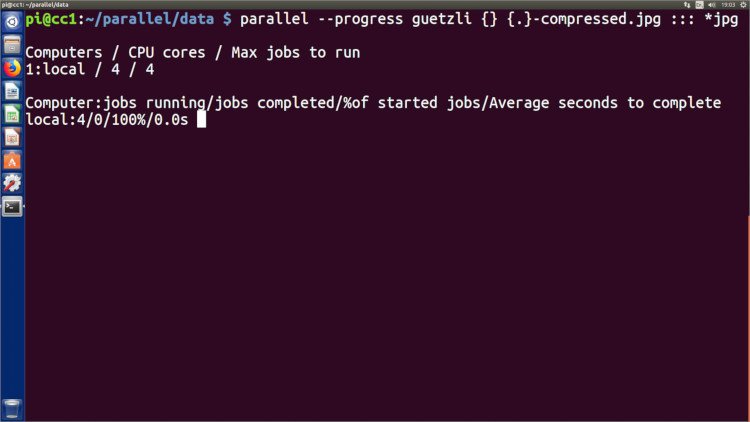

parallel --progress guetzli {} {.}-compressed.jpg ::: *jpg

Instead of running the 50 tasks in a serial fashion, GNU Parallel automatically runs as many jobs as there are available processor cores - four, in the case of the Raspberry Pi 3 Model B. The result is an immediate performance boost: the job is now complete in just 465 seconds.
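The {} and {.} placeholders in that command are GNU Parallel's replacement strings: {} is the input filename as given, and {.} is the same name with its extension removed. This means the parallel run writes photo-compressed.jpg rather than the photo.jpg-compressed.jpg produced by the serial loop. As a rough sketch of what gets substituted, the same expansion can be mimicked in plain shell; the preview function and the filenames here are hypothetical, and the ${f%.*} expansion only matches {.} for simple filenames without directory parts.

```shell
# Mimic GNU Parallel's {} and {.} replacement strings with plain shell
# parameter expansion, to preview the commands that would be generated.
# ASSUMPTION: "preview" and the sample filenames are purely illustrative.
preview() {
    for f in "$@"; do
        # $f plays the role of {}; ${f%.*} drops the last extension, like {.}
        echo "guetzli $f ${f%.*}-compressed.jpg"
    done
}

preview holiday.jpg cat.jpg
```

With GNU Parallel itself installed, adding --dry-run to the real command prints the commands it would run without executing them, which is a cheap way to check the output names before committing to a long job.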

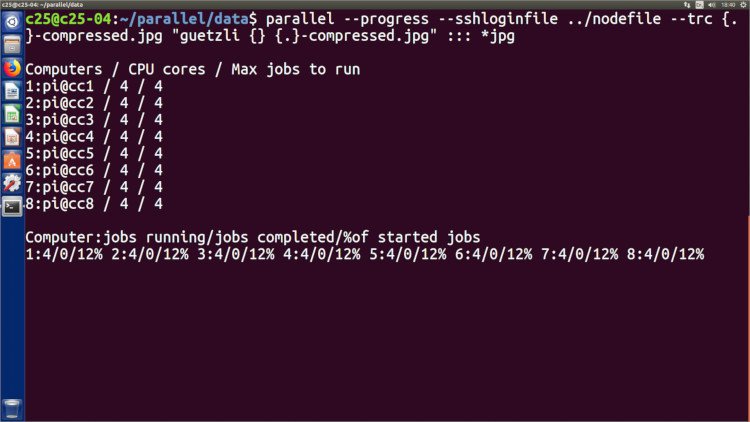

With a C25, though, we don’t have just one quad-core Raspberry Pi at our disposal; we have eight of them, and GNU Parallel can take immediate advantage of them all. It can use a nodefile as a list of remote nodes in a cluster, logging into each with ssh and copying the files being processed to and from each node using rsync. After cleaning up the files and creating a nodefile, we can run the same job again, but this time take advantage of every node in the cluster:

parallel --progress --sshloginfile nodefile --trc {.}-compressed.jpg guetzli {} {.}-compressed.jpg ::: *jpg
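The nodefile itself is a plain text file with one SSH login per line, optionally prefixed with a core count and a slash. A minimal sketch of creating one follows; the hostnames node1 to node8 and the pi user are assumptions for illustration, not the C25's actual node names.

```shell
# Write a nodefile describing an eight-node cluster.
# ASSUMPTION: hostnames node1..node8 and the "pi" user are hypothetical;
# substitute whatever your own nodes are called.
make_nodefile() {
    for n in 1 2 3 4 5 6 7 8; do
        # The "4/" prefix tells GNU Parallel to treat the host as having four cores
        echo "4/pi@node$n"
    done
}

make_nodefile > nodefile
cat nodefile
```

Each node needs to accept ssh logins from the machine running parallel without a password prompt, and needs guetzli and rsync installed. The --trc option is shorthand for transferring the input file to the node, returning the named output file, and cleaning both up on the remote side afterwards.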

The difference is stark: the job which took Guetzli 1,755 seconds on its own and 465 seconds when parallelised across all four cores of a single node takes just 125 seconds when spread across the cluster - and without needing to modify Guetzli in any way.

It’s not a linear gain, of course: for GNU Parallel to farm the job out to the cluster, it first has to copy the file to be processed out to the remote node, then copy the resulting output back to the front-end processor (FEP). Using small and relatively quickly-processed files, as in this example, means this overhead eats into the potential performance gain. So does the 100 Mb/s network port of the Raspberry Pi 3 Model B, which can be improved by a rough factor of three by switching the nodes to the newer and faster Raspberry Pi 3 Model B+ with its gigabit-compatible Ethernet port.
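To put rough numbers on that, the timings quoted in this update can be turned into speedup and efficiency figures. The sketch below uses awk for the floating-point arithmetic and takes the serial run as 1,755 seconds; the speedup function is a hypothetical helper, not part of GNU Parallel.

```shell
# speedup BASE_SECONDS NEW_SECONDS WORKERS
# Prints the speedup factor and the parallel efficiency as a percentage.
# ASSUMPTION: "speedup" is an illustrative helper name.
speedup() {
    awk -v base="$1" -v new="$2" -v n="$3" 'BEGIN {
        s = base / new
        printf "%.2fx speedup, %.1f%% efficiency\n", s, 100 * s / n
    }'
}

speedup 1755 465 4    # four cores of one node vs. a single core
speedup 465 125 8     # eight nodes vs. one node
```

On these figures the four cores of a single node are used at better than 90% efficiency, while the eight-node run manages a little under half of the ideal eightfold gain, which reflects the copy overhead described above.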

While you still have to think carefully about how to get the best performance for your workload, GNU Parallel shows just how easy it can be to get started with cluster computing on a Circumference C25 or the larger C100.

-Gareth and the Circumference Team