We’ve been hard at work adding support for various network architectures through our Python API.

Object Detector Models

First up are object detectors, most (if not all) of which now work. We now have six fully tested object detector models, and more are likely to ‘just work’:

- PASCAL VOC 20-class

- Pedestrian and Vehicle Detector - ADAS*

- Pedestrian Detection - ADAS

- Person Detection - Retail

- Vehicle Detection - ADAS

- Vehicle License Plate Detection

*ADAS = Advanced Driver Assistance System

These all now work through our DepthAI Python API, available on our GitHub. You can also now convert your own object detection models to run on DepthAI using a simple one-line conversion.
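To give a feel for what handling a detector's output in Python can look like, here is a minimal sketch. It is not the DepthAI API: it assumes the detector emits SSD-style rows of seven values (`image_id`, `label`, `confidence`, `x_min`, `y_min`, `x_max`, `y_max`) with coordinates normalized to 0..1, and that a negative `image_id` marks the end of valid rows. The names `Detection` and `decode_detections` are hypothetical, chosen only for illustration.

```python
from typing import List, NamedTuple, Sequence, Tuple


class Detection(NamedTuple):
    label: int
    confidence: float
    box: Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max) in pixels


def _clamp01(value: float) -> float:
    """Keep a normalized coordinate inside the 0..1 range."""
    return max(0.0, min(1.0, float(value)))


def decode_detections(
    rows: Sequence[Sequence[float]],
    frame_width: int,
    frame_height: int,
    threshold: float = 0.5,
) -> List[Detection]:
    """Turn assumed SSD-style output rows into pixel-space detections.

    Each row is assumed to be:
    [image_id, label, confidence, x_min, y_min, x_max, y_max]
    """
    detections = []
    for image_id, label, confidence, x_min, y_min, x_max, y_max in rows:
        if image_id < 0:
            break  # assumed end-of-list sentinel; later rows are ignored
        if confidence < threshold:
            continue  # drop low-confidence candidates
        box = (
            int(_clamp01(x_min) * frame_width),
            int(_clamp01(y_min) * frame_height),
            int(_clamp01(x_max) * frame_width),
            int(_clamp01(y_max) * frame_height),
        )
        detections.append(Detection(int(label), float(confidence), box))
    return detections
```

The resulting pixel-space boxes can be handed straight to whatever drawing routine you use to overlay them on the frame. If your model's output layout differs, only the row unpacking needs to change.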

Neural Models

We have also successfully run the following neural model types, and are now working to integrate parsing of these models’ output formats in Python via the DepthAI API.

- Object and Action Recognition

- Human Pose Estimation

- Image Processing (e.g. style transfer)

- Re-identification (e.g. recognizing person A from two separate/disparate camera feeds)

- Text Recognition

In all of these cases, we have confirmed that the models run properly by using initial, prototype-level parsing and display of the results from DepthAI. We’re now cleaning these up and writing example Python code to parse the output of these types of models. The cleaned-up examples will be added to our GitHub when complete.
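As a rough illustration of the kind of parsing involved, take re-identification. A common approach (assumed here, not confirmed as what our examples will use) is for the model to output an embedding vector per person crop, and for identities to be matched by cosine similarity between embeddings. The sketch below is plain Python rather than the DepthAI API, and the names `cosine_similarity` and `match_identity` as well as the 0.7 threshold are hypothetical.

```python
import math
from typing import Dict, Optional, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat an all-zero embedding as matching nothing
    return dot / (norm_a * norm_b)


def match_identity(
    query: Sequence[float],
    gallery: Dict[str, Sequence[float]],
    threshold: float = 0.7,  # illustrative value; tune per model
) -> Optional[str]:
    """Return the gallery identity most similar to `query`, or None
    if no gallery entry reaches the similarity threshold."""
    best_id, best_score = None, threshold
    for identity, embedding in gallery.items():
        score = cosine_similarity(query, embedding)
        if score >= best_score:
            best_id, best_score = identity, score
    return best_id
```

Here `gallery` would hold embeddings of people seen on one camera feed, and `query` an embedding from another feed. A return value of `None` means the person has not been seen before.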

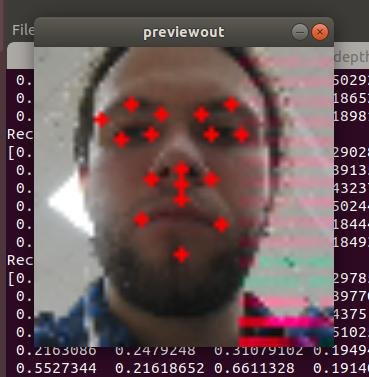

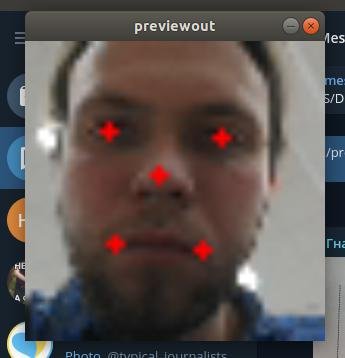

Below is an example trying out facial landmark detection:

35-marker model:

5-marker model:

Cheers,

Brandon & the Luxonis team