Project update 2 of 3

Magic Wand Demo

In this tutorial, our partners at QuickLogic & SensiML build a Magic Wand application that detects spells and runs entirely on the SparkFun Thing Plus - QuickLogic EOS S3 using the SensiML Analytics Toolkit.

Enjoy!

The SparkFun Team

Overview

In this tutorial, we are going to build a magic wand that recognizes different spell incantations in real time using the SparkFun Thing Plus - QuickLogic EOS S3 microcontroller, the SensiML Analytics Toolkit, and TensorFlow Lite for Microcontrollers.

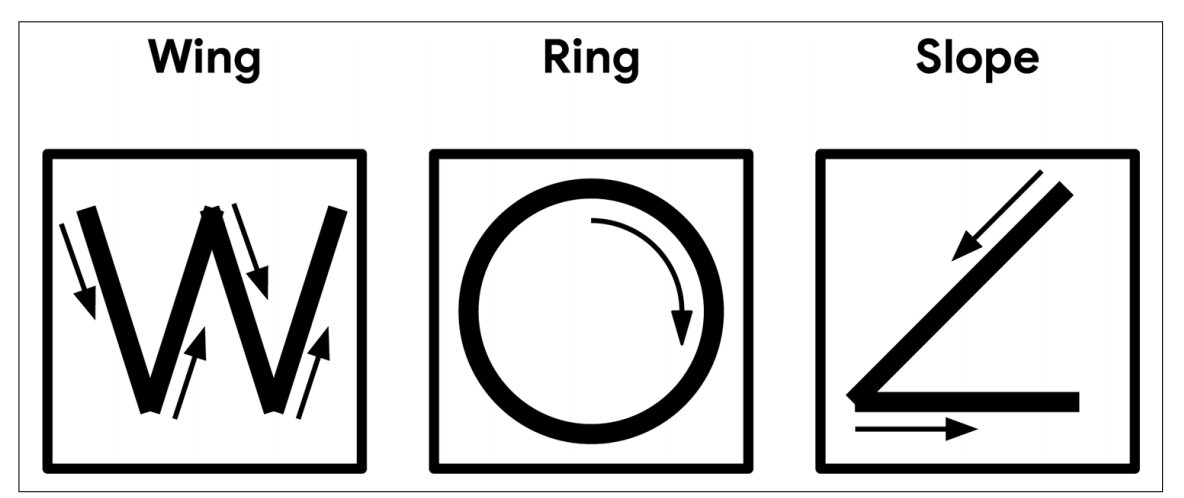

This demo implements the Magic Wand demo that was developed to showcase TensorFlow Lite for Microcontrollers. We will teach our device to recognize three gestures:

- Wing: Start from the upper left corner and carefully trace the letter "W" for two seconds.

- Ring: Start upright, move the wand in a clockwise circle for one second.

- Slope: Start by holding the wand facing upward, with the LEDs toward you. Move the wand down at an incline to the left and then horizontally to the right for one second.

This tutorial focuses on gesture recognition, but these technologies apply to a variety of tinyML applications such as predictive maintenance, activity recognition, sound classification, and keyword spotting.

Objective

- Demonstrate how to collect and annotate a dataset of gestures using SensiML Data Capture Lab

- Build a data pipeline to extract features in real time for the SparkFun Thing Plus - QuickLogic EOS S3

- Train a Classification model using TensorFlow

- Convert the model into a Knowledge Pack and flash it to the SparkFun Thing Plus - QuickLogic EOS S3

- Perform live validation of the Knowledge Pack running on-device using the SensiML Streaming Gateway

Building a Data Set

For every machine learning project, the quality of the final product depends on the quality of your curated data set. Time series sensor data, unlike image and audio data, is often unique to the application, because the combination of sensor placement, sensor type, and event type greatly affects the data created. Because of this, you are unlikely to have a relevant dataset already available, meaning you will need to collect and annotate your own.

We are going to use the SensiML Data Capture Lab to collect and annotate data for the different gestures. We have created a template for this project which will get you started. The project has been pre-populated with the labels and metadata information, along with some pre-recorded examples. To add this project to your account:

- Download and unzip the SparkFun Thing Plus - QuickLogic EOS S3 - Magic Wand Demo Project.

- Open the SensiML Data Capture Lab

- Click the Upload Project button

- Click the Browse button

- Navigate to the SparkFun Thing Plus - QuickLogic EOS S3 - Magic Wand Demo folder and select the SparkFun Thing Plus - QuickLogic EOS S3 - Magic Wand Demo.dclproj file

- Click Upload

The project will be synced to your SensiML account. In the next few sections, we are going to walk through how we used the Data Capture Lab to collect and label this dataset.

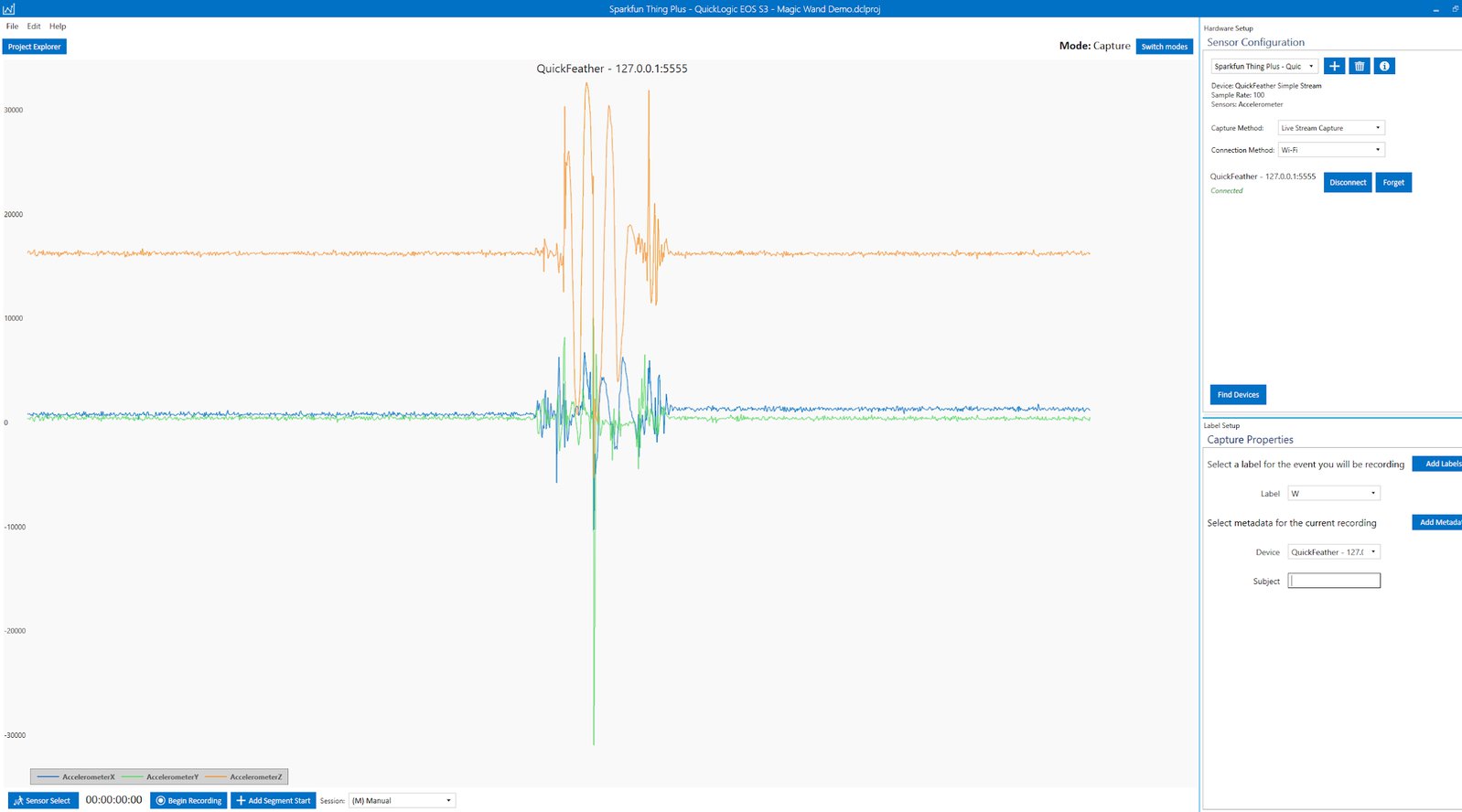

Streaming Sensor Data

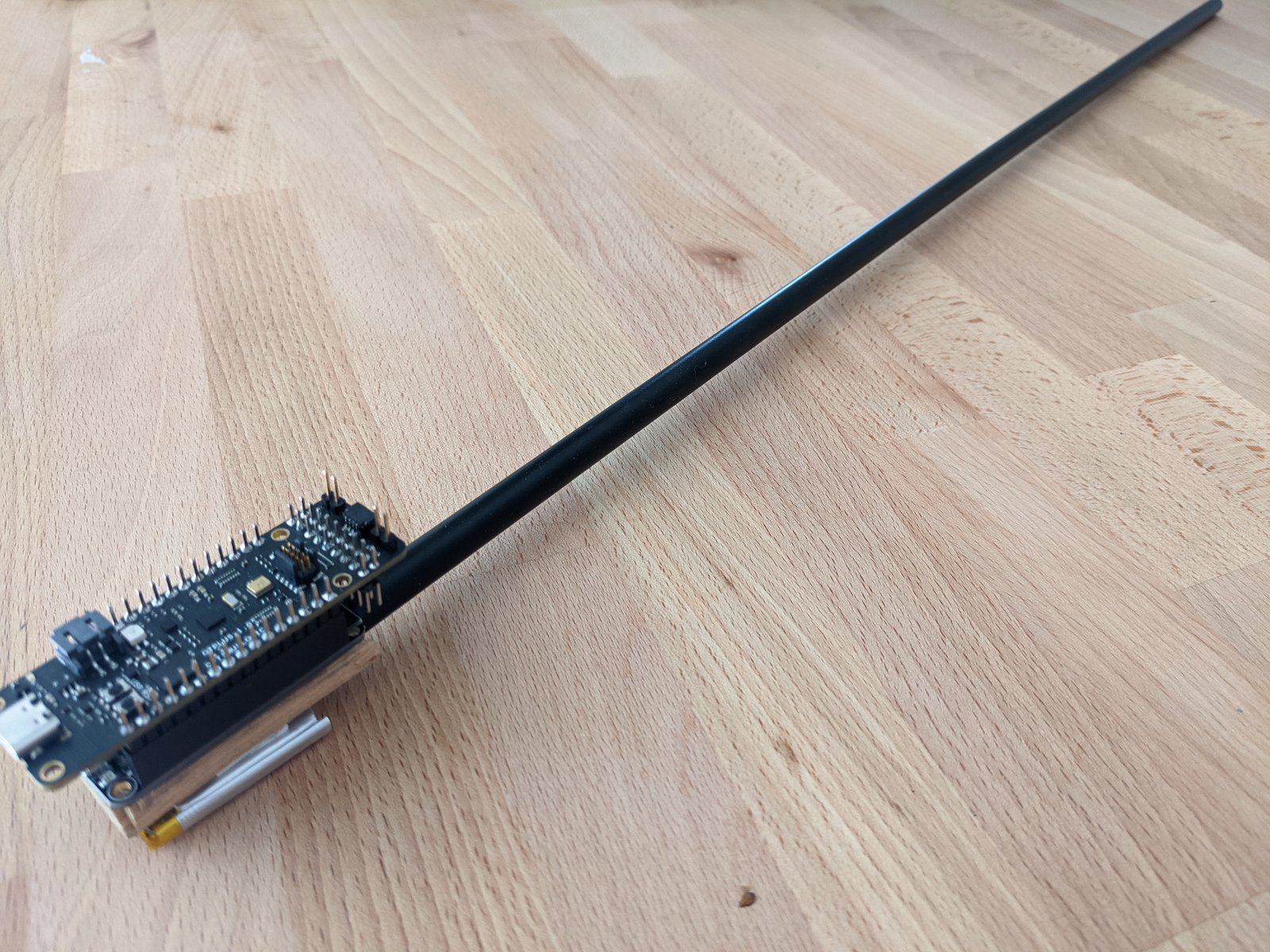

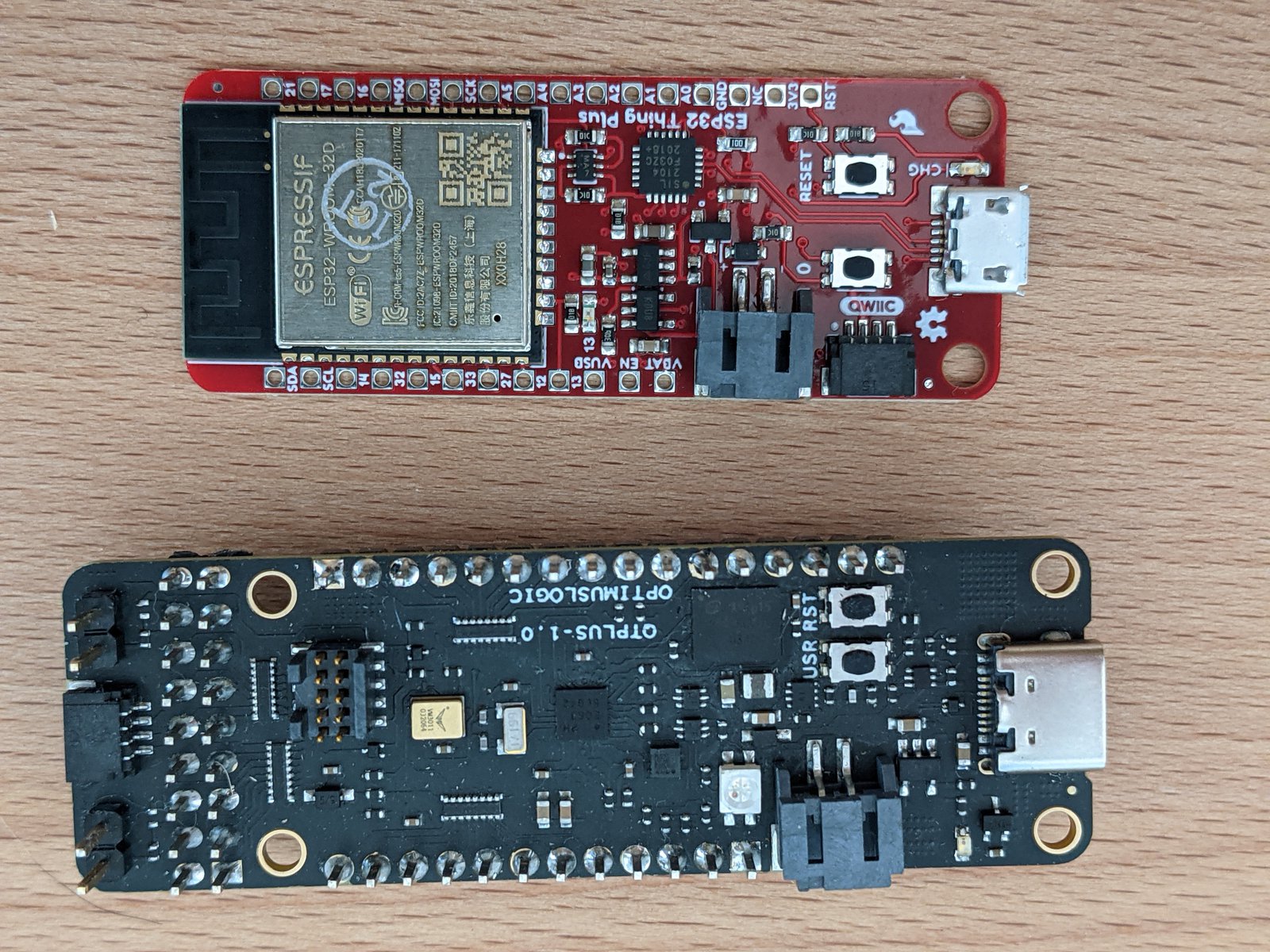

To enable wireless data capture, we are going to attach the SparkFun Thing Plus - ESP32 WROOM with this data streaming firmware to stream the IMU sensor data over Wi-Fi to the Data Capture Lab. If you don’t have the ESP32 available, you can use the serial data capture over USB as well. We have configured this sensor to capture IMU Accelerometer data at a sample rate of 100 Hz.
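The sample rate determines how many readings each gesture produces. The sketch below works through that arithmetic for the 100 Hz rate and the nominal gesture durations given earlier. The three-axis assumption and the names `GESTURE_SECONDS` and `samples_for` are illustrative and do not come from the capture firmware.

```python
SAMPLE_RATE_HZ = 100  # sample rate from the capture configuration described above

# Nominal gesture durations in seconds, taken from the gesture descriptions.
GESTURE_SECONDS = {"Wing": 2.0, "Ring": 1.0, "Slope": 1.0}
AXES = 3  # assumption: a three-axis accelerometer (X, Y, Z)


def samples_for(duration_s: float, rate_hz: int = SAMPLE_RATE_HZ) -> int:
    """Number of samples captured per axis over duration_s seconds."""
    return round(duration_s * rate_hz)


for name, seconds in GESTURE_SECONDS.items():
    n = samples_for(seconds)
    print(f"{name}: {n} samples per axis, {n * AXES} values in total")
```

Under these assumptions a one-second gesture spans 100 samples per axis and the two-second Wing gesture spans 200, which is a useful sanity check when you later inspect segment lengths.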

To set up the hardware for data collection:

- Flash the IMU Data Collection firmware to your SparkFun Thing Plus - QuickLogic EOS S3

- Flash the Wi-Fi Data Streaming firmware to the SparkFun Thing Plus - ESP32 WROOM

- Plug a Li-Poly battery into the SparkFun Thing Plus - ESP32 WROOM for wireless power

- Stack the two boards and reset them

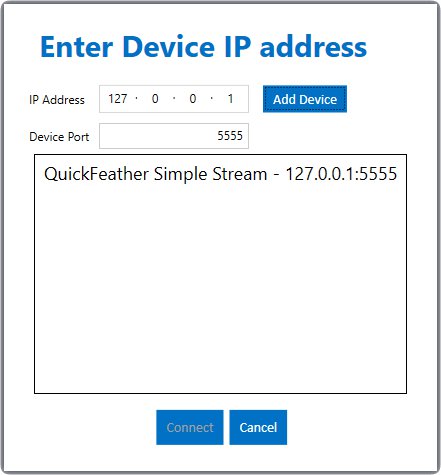

To capture data in the Data Capture Lab over Wi-Fi:

- Go to the Capture mode in the Data Capture Lab

- Make sure the SparkFun Thing Plus - QuickLogic EOS S3 Sensor capture configuration is selected

- Click the Find Devices button

- Enter the IP address and Port of the ESP32 (see the README for how to find this.)

- Click the Add Device button

- Click the Connect button

You should now see the Accelerometer data streaming into the Data Capture Lab. See the documentation for detailed instructions for Wi-Fi streaming.

Recording Sensor Data

You should now see a live stream of the sensor data. Before you record data, select the appropriate label and metadata for this capture. Maintaining good records of sensor metadata is critical to building a good machine learning model.

To start recording sensor data, hit begin record. When you hit stop recording, the sensor data will be saved locally to your machine as well as synced with the SensiML Cloud project.

Along with sensor data, it is also useful to capture video data to help us during the data annotation process. We record the video with our webcam and will sync it to the sensor data when we start labeling the dataset.

Annotating Sensor Data

Now that we have captured enough sensor data, the next step is to add labels to the dataset to build out our ground truth. To add a label to your data:

- Open the Project Explorer tab and double-click one of the captures that you collected.

- Once the capture file opens, right-click on the graph. This will place a blue and red bar. The blue bar is the start of the segment, the red bar is the end.

- Move the start and end of the segment to cover the entire region of one gesture.

- With the segment still selected, press Ctrl+E or click the Edit Label button and select the label that is associated with the sensor data.

You have now labeled a region of the capture file which we can use when building our model. The video below walks through how to label the events of a captured file in the SensiML Data Capture Lab.
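Conceptually, each label is a start index, an end index, and a name attached to a capture. As a rough illustration of how labeled regions become training examples, the sketch below slices a synthetic capture by such segments. The tuple layout and the `cut_segments` helper are assumptions made for this example, not the Data Capture Lab file format.

```python
from typing import Dict, List, Tuple

Sample = Tuple[int, int, int]   # one accelerometer reading: (x, y, z)
Segment = Tuple[int, int, str]  # (start index, end index inclusive, label)


def cut_segments(
    capture: List[Sample], segments: List[Segment]
) -> Dict[str, List[List[Sample]]]:
    """Group the labeled slices of a capture by label."""
    by_label: Dict[str, List[List[Sample]]] = {}
    for start, end, label in segments:
        if not 0 <= start <= end < len(capture):
            raise ValueError(f"segment {start}-{end} is outside the capture")
        by_label.setdefault(label, []).append(capture[start:end + 1])
    return by_label


# Synthetic capture: 500 made-up samples (5 seconds at 100 Hz).
capture = [(i, -i, 2 * i) for i in range(500)]
segments = [(50, 149, "Ring"), (200, 399, "Wing")]

examples = cut_segments(capture, segments)
for label, slices in examples.items():
    print(label, [len(s) for s in slices])
```

Anything outside a labeled segment is simply ignored here, which is why it matters that each segment covers the whole gesture and nothing else.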

Building a model

We are going to use Google Colab to train our machine learning model using the data we collected from the SparkFun Thing Plus - QuickLogic EOS S3 in the previous section. Colab provides a Jupyter notebook that allows us to run our TensorFlow training in a web browser. Open the notebook here and follow along to train your own magic spell detection model.
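Before training, each labeled segment has to be turned into a fixed-length input for the classifier. The sketch below shows one simple way to do that, using per-axis summary statistics. This is an assumption chosen for illustration; the features and the helper names `axis_features` and `segment_features` are not taken from the notebook, which may use a different pipeline.

```python
import statistics
from typing import List, Sequence, Tuple

Sample = Tuple[float, float, float]  # one accelerometer reading: (x, y, z)


def axis_features(values: Sequence[float]) -> List[float]:
    """Mean, population standard deviation, minimum and maximum of one axis."""
    return [
        statistics.fmean(values),
        statistics.pstdev(values),
        min(values),
        max(values),
    ]


def segment_features(segment: Sequence[Sample]) -> List[float]:
    """Concatenate the per-axis features into one vector of 12 values."""
    features: List[float] = []
    for axis in zip(*segment):  # transposes the samples into X, Y and Z series
        features.extend(axis_features(axis))
    return features


# Tiny made-up segment of three samples.
segment = [(0.0, 1.0, 2.0), (2.0, 1.0, 0.0), (4.0, 1.0, 2.0)]
vector = segment_features(segment)
print(len(vector), vector[:4])
```

A vector like this has the same length no matter how long the gesture lasted, so one-second and two-second gestures can feed the same model input.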

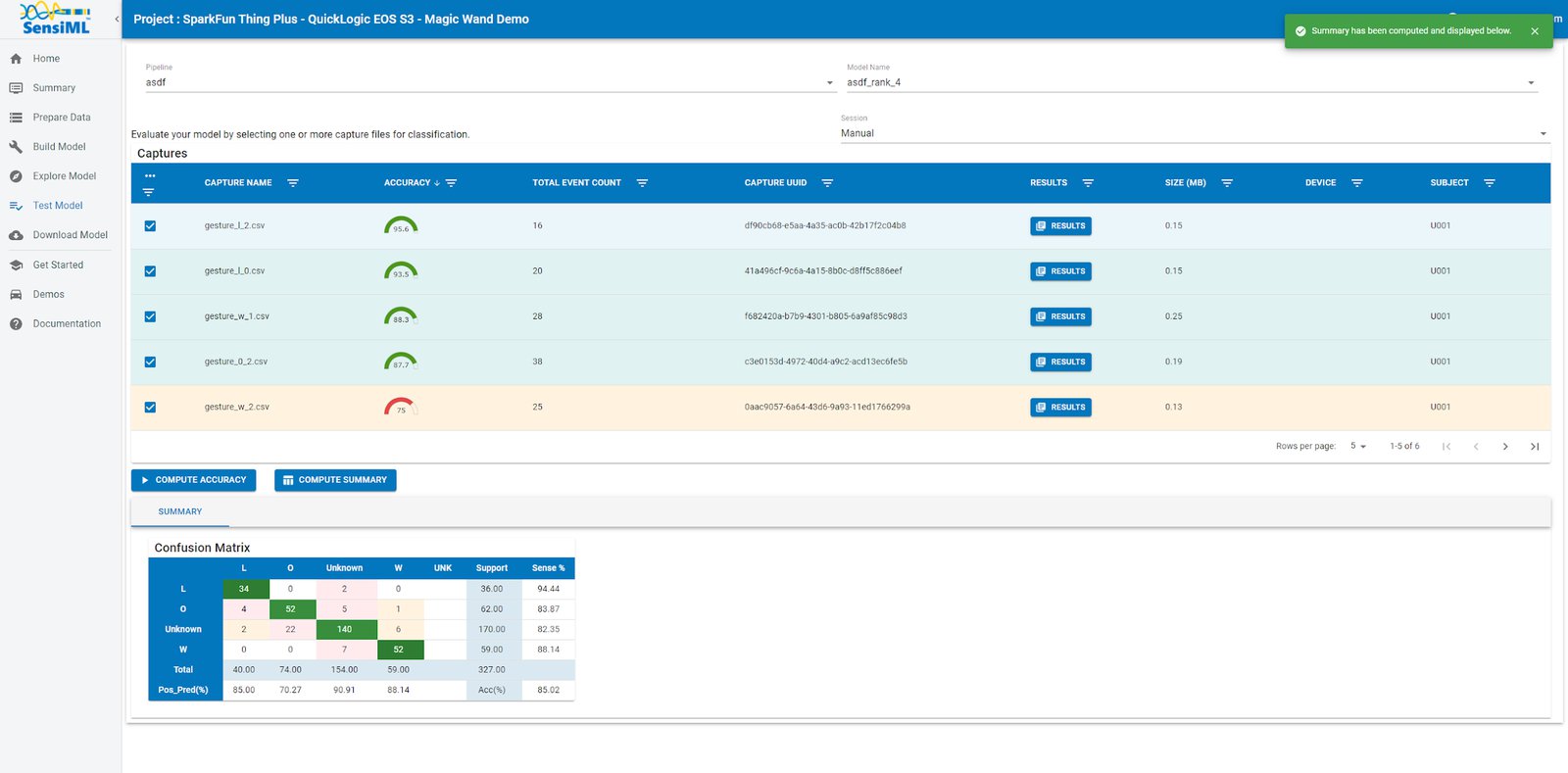

Offline model validation

For the next part of the tutorial, you will need to log into Analytic Studio, where we will validate and download the model that can be flashed to the device.

After you log into the Analytic Studio, click on your project to set it as the active project. Then, go to the Test Model tab. You can test against any of the captured data files as follows:

- Select the pipeline

- Select the model you want to test

- Select any of the capture files in the Project

- Click the Compute Accuracy button to classify that capture using the selected model.

The model is compiled in the SensiML Cloud and the capture files are passed through it to emulate the edge device classifications against the selected sensor data.
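To make the accuracy figure concrete, the sketch below computes accuracy and a confusion count from a list of ground-truth labels and a list of predictions. The data is made up and the functions are illustrative; this is not how the Analytic Studio computes its results internally.

```python
from collections import Counter
from typing import Dict, List, Tuple


def accuracy(truth: List[str], predicted: List[str]) -> float:
    """Fraction of segments whose predicted label matches the ground truth."""
    if len(truth) != len(predicted) or not truth:
        raise ValueError("label lists must be non-empty and of equal length")
    correct = sum(t == p for t, p in zip(truth, predicted))
    return correct / len(truth)


def confusion(truth: List[str], predicted: List[str]) -> Dict[Tuple[str, str], int]:
    """Count each (true label, predicted label) pair."""
    return dict(Counter(zip(truth, predicted)))


# Made-up results for six labeled segments.
truth = ["Wing", "Wing", "Ring", "Ring", "Slope", "Slope"]
predicted = ["Wing", "Ring", "Ring", "Ring", "Slope", "Wing"]

print(f"accuracy: {accuracy(truth, predicted):.2f}")
for (true_label, predicted_label), count in sorted(confusion(truth, predicted).items()):
    print(f"{true_label} -> {predicted_label}: {count}")
```

The confusion counts are often more useful than the single accuracy number, because they show which gestures are being mistaken for one another and therefore where more training data is needed.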

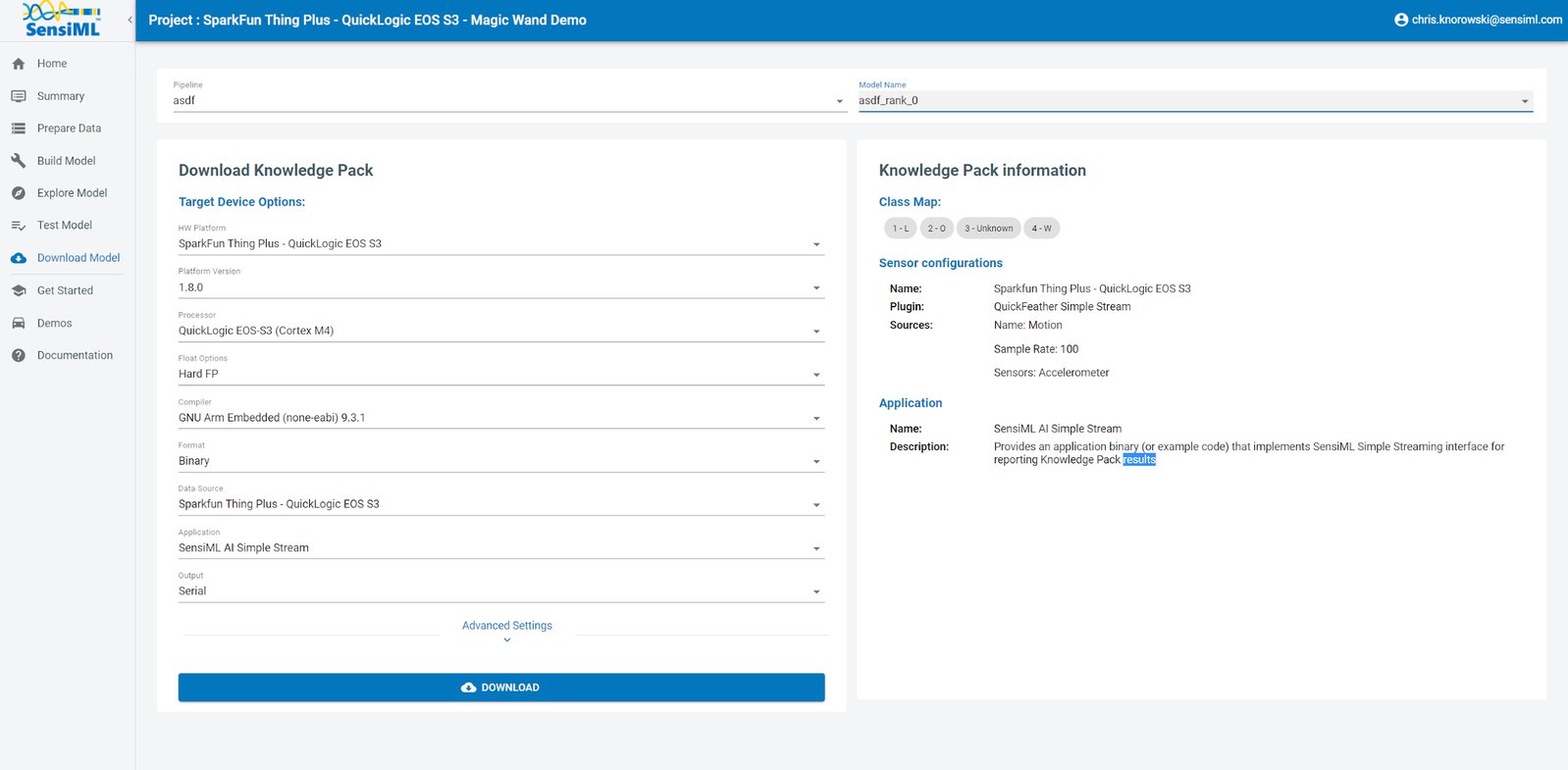

Downloading the Knowledge Pack

Now that we have validated our model, it is time for a live test. To build and download the firmware for your specific device:

- Go to the Download Model tab of the Analytic Studio

- Select the pipeline and model you want to download

- Select the HW platform SparkFun Thing Plus - QuickLogic EOS S3

- Select Format Binary

- Click Download and the model will be compiled and downloaded to your computer.

- Unzip the downloaded file and flash it to your device

NOTE: Instructions for flashing are found in the QORC SDK or here

Live Test Using the SensiML Gateway

Being able to rapidly iterate on your model is critical when developing an application that uses machine learning. To facilitate validating in the field, we provide the SensiML Open Gateway. The Gateway allows you to connect to your microcontroller over Serial, Bluetooth, or TCP/IP and see the classification results live as they are generated by the Knowledge Pack running on the microcontroller. Documentation on how to use the Open Gateway can be found here. The following clip shows the SparkFun Thing Plus - QuickLogic EOS S3 recognizing magic incantations in real time.
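If you want to post-process the live results yourself, a small amount of smoothing helps suppress one-off misclassifications. The sketch below assumes each result arrives as one JSON object per line with a `Classification` field. That field name, the class IDs in `CLASS_MAP`, and the sample lines are all assumptions for illustration, so check the Open Gateway documentation for the actual output format.

```python
import json
from collections import Counter, deque
from typing import Deque, Iterable, Iterator

# Hypothetical mapping from class IDs to gesture labels.
CLASS_MAP = {1: "Ring", 2: "Slope", 3: "Wing"}


def smoothed_labels(lines: Iterable[str], window: int = 3) -> Iterator[str]:
    """Yield the majority label over the last `window` valid results."""
    recent: Deque[int] = deque(maxlen=window)
    for line in lines:
        try:
            class_id = int(json.loads(line)["Classification"])
        except (ValueError, KeyError, TypeError):
            continue  # skip lines that are not valid result objects
        recent.append(class_id)
        winner, _ = Counter(recent).most_common(1)[0]
        yield CLASS_MAP.get(winner, "Unknown")


# Made-up stream, including one corrupted line.
stream = [
    '{"ModelNumber": 0, "Classification": 1}',
    "garbage",
    '{"ModelNumber": 0, "Classification": 1}',
    '{"ModelNumber": 0, "Classification": 3}',
    '{"ModelNumber": 0, "Classification": 3}',
    '{"ModelNumber": 0, "Classification": 3}',
]
print(list(smoothed_labels(stream)))
```

A larger window makes the output steadier but slower to react to a new gesture, so the value of 3 used here is only a starting point.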

Summary

We hope you enjoyed this tutorial using the SensiML Analytics Toolkit. In this tutorial we have covered how to:

- Collect and annotate a high-quality data set

- Build a query as input to your model

- Use SensiML and TensorFlow to build an edge optimized machine learning model

- Use the SensiML Analytic Studio to test the model offline and generate the firmware for your device

- Use the SensiML Streaming Gateway to view results from the model running on the device in real time

For more information about SensiML, visit https://sensiml.com. If you want our help to build your own application, please get in touch.

About QuickLogic & SensiML

QuickLogic is a fabless semiconductor company that develops low-power, multi-core MCUs, FPGAs, and embedded FPGA Intellectual Property (IP), as well as voice and sensor processing solutions. The Analytics Toolkit from our subsidiary, SensiML Corporation, completes the end-to-end solution by using AI technology to provide a full development platform spanning data collection, labeling, algorithm and firmware auto-generation, and testing. The full range of solutions is underpinned by open source hardware and software tools to enable the practical and efficient adoption of AI, voice, and sensor processing across mobile, wearable, hearable, consumer, industrial, edge, and endpoint IoT.