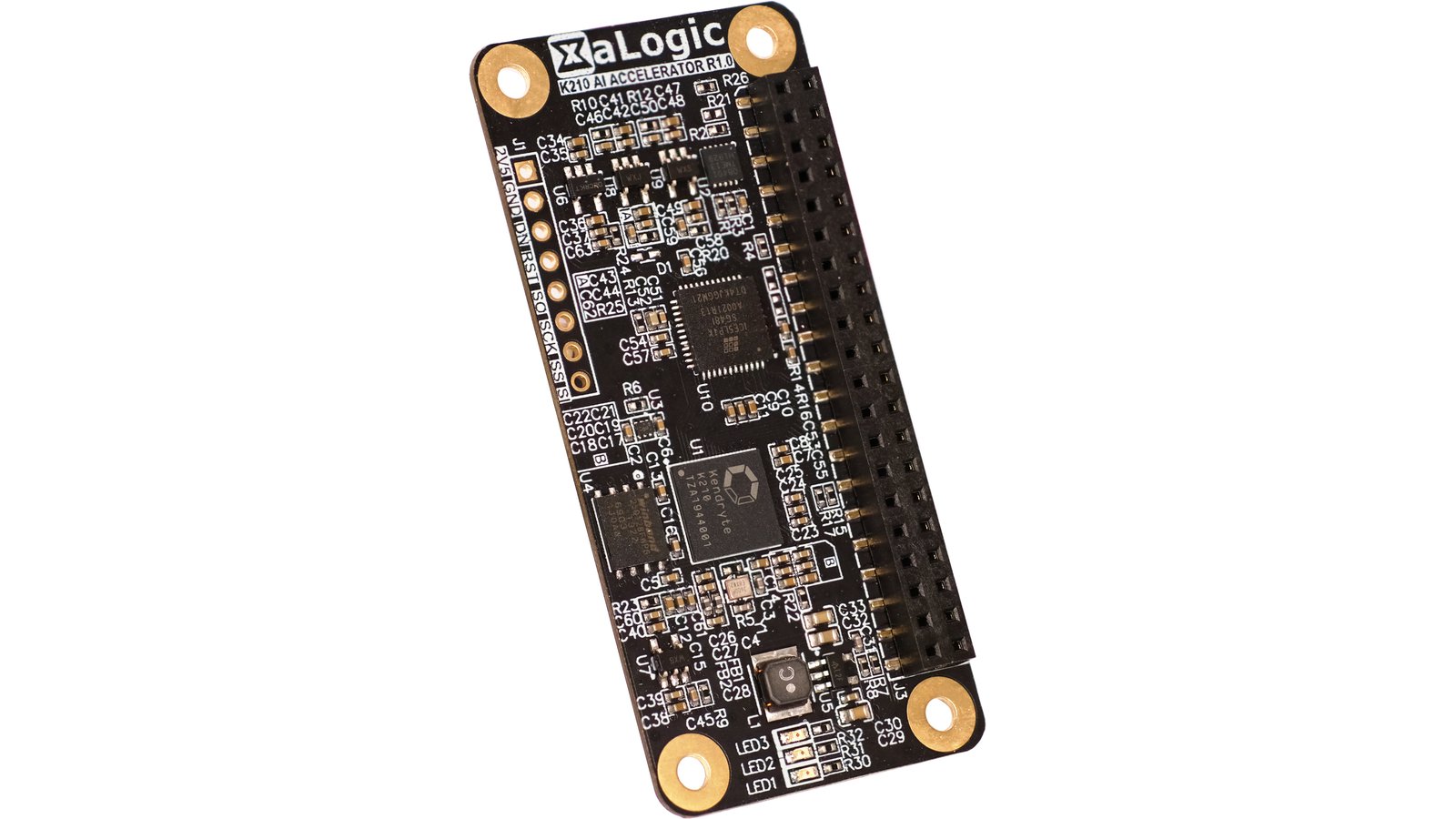

K210 AI Accelerator is a compact Raspberry Pi HAT that uses the Kendryte K210 AI processor to provide 0.5 TOPS (Tera Operations Per Second) of processing power. Using one of our many free pre-trained models, you can add deep-learning machine vision features to your RPi-based camera in a matter of minutes, skipping the tedious process of training your own neural networks.

This handy HAT lets you add AI features to your RPi-based camera even if you don’t know how to train your own model. Our plugin module, together with pre-trained models, will make your camera AI-enabled in minutes with a few Python API calls.

Pre-trained models are currently available for:

Potential future pre-trained models:

We try to make your life easier by providing free models, but that should not stop you from developing your own.

To train your own model, you will need a separate computer, preferably with an Nvidia GPU. We predominantly use TensorFlow and will provide an example of how to train your own object detection model. The Kendryte KModel conversion tool supports TFLite and Caffe, with limited support for the ONNX format.
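Since TFLite is one of the input formats for the conversion step, it can help to confirm that an exported file really is a TFLite FlatBuffer before converting it. The sketch below is a minimal sanity check using only the Python standard library. It relies on the `TFL3` file identifier that the TFLite schema places at byte offsets 4 to 7. The function names are illustrative and are not part of any XaLogic or Kendryte tooling.

```python
TFLITE_IDENTIFIER = b"TFL3"  # FlatBuffers file identifier declared by the TFLite schema


def looks_like_tflite(data: bytes) -> bool:
    """Return True if the buffer carries the TFLite FlatBuffers identifier.

    A FlatBuffers file starts with a 4-byte root table offset, followed by
    an optional 4-byte file identifier at byte offsets 4..7.
    """
    return len(data) >= 8 and data[4:8] == TFLITE_IDENTIFIER


def check_model_file(path: str) -> bool:
    """Read only the first 8 bytes of a file and test the identifier."""
    with open(path, "rb") as f:
        return looks_like_tflite(f.read(8))
```

This only checks the container format. It says nothing about whether the layers inside the model are supported by the converter or the K210.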

Using the familiar Visual Studio Code for Raspberry Pi and the necessary toolchain for K210, you can develop all the K210 firmware on the Pi itself.

The K210 AI Accelerator has an Infineon Trust-M onboard. This lets you establish a secure connection to AWS through MQTT without exposing the private key, which is important when you are deploying your IoT devices in the field.

Schematics, C code in the K210, and all code running on the Raspberry Pi will be open sourced. Pre-trained models are provided in binary form. Sample Caffe and TensorFlow projects are also available to help you create your own custom neural network.

GitHub: https://github.com/xalogic-open

Discord (chat/discussion): https://discord.gg/94S3KrJ87Z

| | K210 AI Accelerator | Coral USB Accelerator | Intel Neural Compute Stick 2 |
|---|---|---|---|
| Manufacturer | XaLogic | Coral | Intel |
| Chipset | Kendryte K210 | Google Edge TPU | Intel® Movidius™ Myriad™ X |
| Connectivity | SPI¹ | USB | USB |
| Form-factor | Raspberry Pi Zero HAT | USB dongle | USB dongle |
| NPU performance | 0.5 TOPS | 4 TOPS | 4/1 TOPS² |
| Power consumption | 0.3 W | 2 W | 1.5 W |
| Security IC | On-board Infineon Trust-M chipset | None | None |
| Open source (schematic and firmware) | Yes | No | No |
| Pre-trained model | Yes | Yes | Yes |
| Build application in minutes | Yes³ | Depends | Depends |
| Price | $38 | $59.99 | $68.95 |

¹ SPI clock speed of 40 MHz.

² The Intel Movidius Myriad X VPU delivers 4 TOPS of performance overall, including a Neural Compute Engine capable of 1 TOPS.

³ We provide a library that helps you build your applications in minutes with little to no knowledge of running ML models. The other solutions involve more of a learning curve.
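To put the 40 MHz SPI clock from footnote ¹ in perspective, here is a rough upper bound on how fast image data could move across the link. This is back-of-the-envelope arithmetic, not a measurement. It assumes a single data line, no protocol overhead, and an example frame of 320x240 pixels in RGB565, none of which are stated in the comparison above.

```python
# Back-of-the-envelope SPI transfer budget. Assumptions are flagged inline.

SPI_CLOCK_HZ = 40_000_000  # 40 MHz SPI clock, taken from footnote 1


def spi_bytes_per_second(clock_hz: int, data_lines: int = 1) -> float:
    """Upper bound on throughput: one bit per clock per data line.

    Assumes no command, framing, or inter-transfer overhead.
    """
    return clock_hz * data_lines / 8


def frame_transfer_ms(width: int, height: int, bytes_per_pixel: int,
                      clock_hz: int = SPI_CLOCK_HZ) -> float:
    """Time in milliseconds to move one uncompressed frame over the link."""
    frame_bytes = width * height * bytes_per_pixel
    return frame_bytes / spi_bytes_per_second(clock_hz) * 1000


# Assumed example frame: 320x240 RGB565 (2 bytes per pixel).
ms = frame_transfer_ms(320, 240, 2)
print(f"{ms:.1f} ms per frame, at most {1000 / ms:.1f} frames/s")
```

Under these assumptions the link carries at most 5 MB/s, so one such frame takes about 30.7 ms and the ceiling is roughly 32 frames per second. Real throughput will be lower once overhead is included.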

K210 AI Accelerator will be manufactured in China by our partner who has manufactured our past products, including the first generation of this product, the XAPIZ3500. We have done a trial run of a small batch to iron out any manufacturing issues.

Once the boards are manufactured, they will be tested in the factory and shipped to Crowd Supply. Crowd Supply will then fulfill your pledges via their logistics partner, Mouser Electronics. For more information, you can refer to this useful guide to ordering, paying, and shipping. You can confirm and update your order details and more in your Crowd Supply account.

Hardware quality and production risks have been mitigated as much as possible for K210 AI Accelerator. The design itself is stable, the first batch has been tested, and several early beta testers have successfully run applications on it. While the supply issues due to COVID-19 are manageable, we continue to be mindful when planning component purchases because some components have long lead times. Should anything occur that would shift shipping timelines, backers will be informed via project updates.

"You can run neural networks on your Raspberry Pi, but they’re demanding and can suck up a lot of your processor time – especially if you’re using a Raspberry Pi Zero. This HAT lets you offload some of the processing..."

Produced by XaLogic in Singapore.

Sold and shipped by Crowd Supply.

One Raspberry Pi HAT powered by the Kendryte K210 AI Processor

A 40-pin extender for use with a Raspberry Pi 3B+ or 4B, needed because the PoE header on those models obstructs the HAT

XaLogic is a start-up based in Singapore with the goal of bringing AI capabilities to the Edge, enabling a smarter IoT ecosystem. XaLogic is a partner of the Infineon Technologies Co-Innovation Space, which supports start-ups in developing viable semiconductor solutions and fast-tracks their product prototypes.

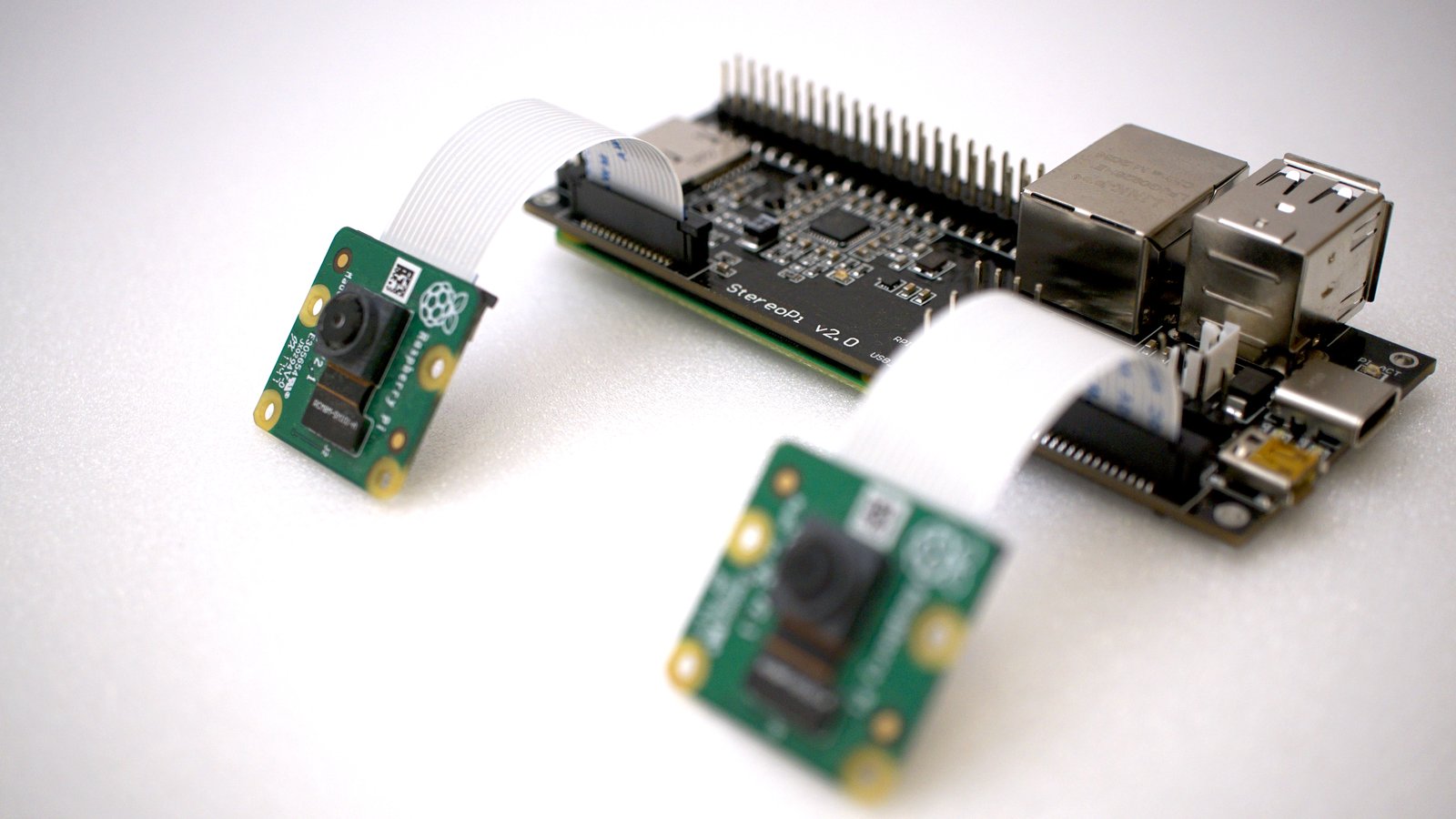

The open-source stereoscopic camera based on Raspberry Pi with Wi-Fi, Bluetooth, and an advanced powering system

Classic NES games on open source hardware that fits in the palm of your hand

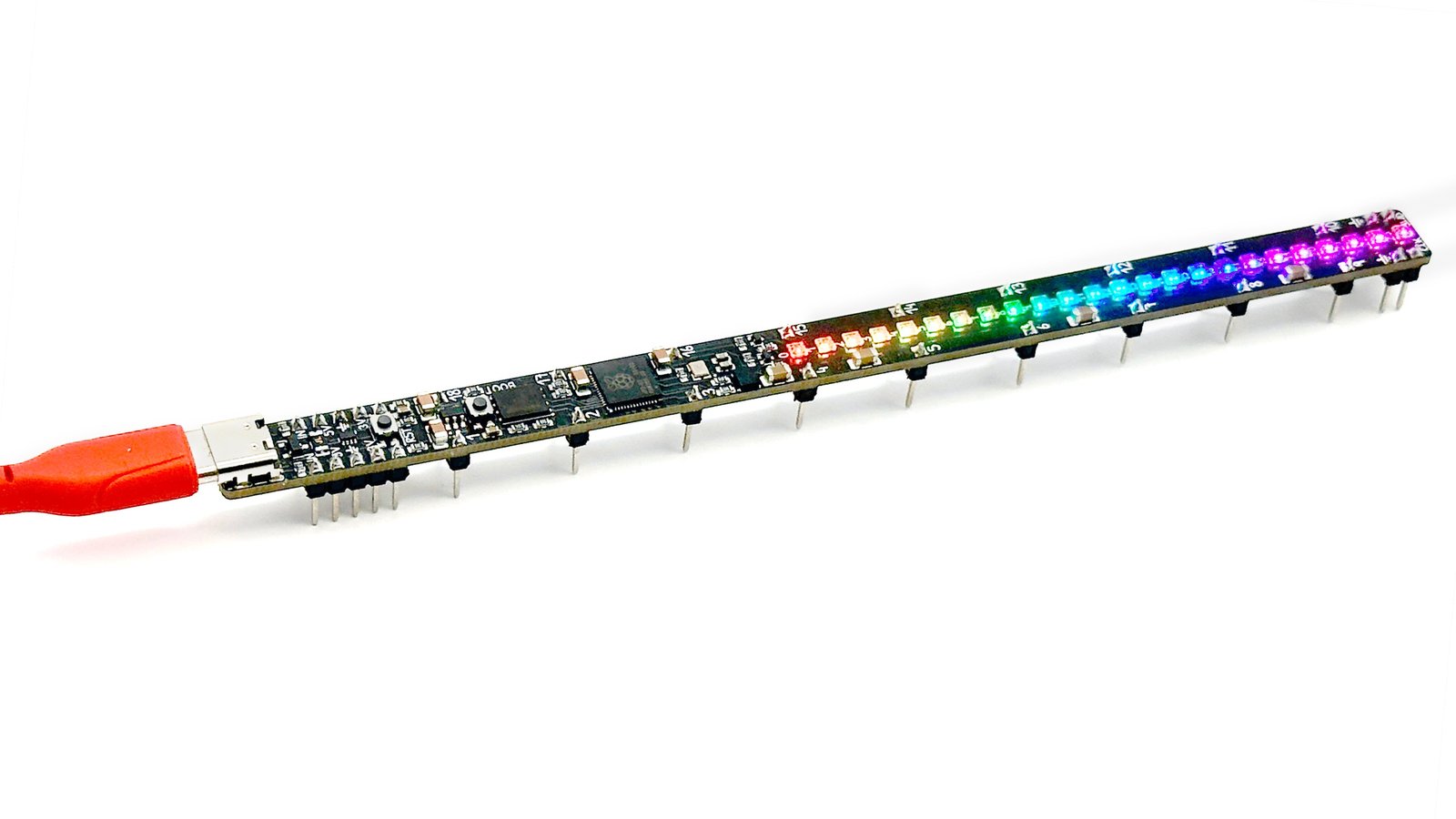

A long, lean, delicious development board with a unique form factor and high-quality components